GitHub Notebook from the video series

Also check out Jake’s awesome, free book: Python Data Science Handbook

Jake Vanderplas has a great video series about reproducible analysis in Jupyter Notebooks. His overall recommendation for using Jupyter:

This is (I think) the minimum set of requirements for a real-time linter ensuring that "Restart and Run All" will work correctly.

— Jake VanderPlas (@jakevdp) November 27, 2017

Before closing out of an analysis session, make sure you can run your notebook from a clean state.

Video Timelines and Notes

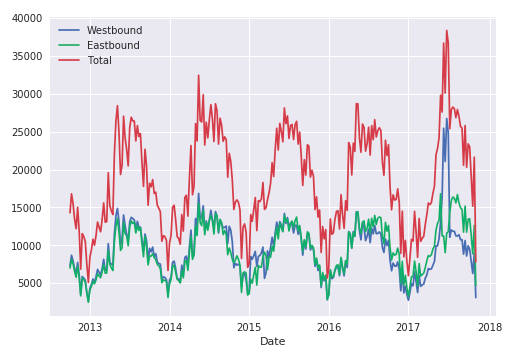

video 1 [5min] - acquiring, loading, plotting data.

- Retrieve data from code; reproducible analysis starts with acquiring the data.

- Use pandas to load data.

- Familiar libraries are often imported with nicknames common in the community

import pandas as pd

video 2 [6min] - exploring data

- explore your data by graphing it from different angels

- matplotlib has built-in styles to prettify plots, including seaborn

- aggregate and groupby, data with pandas data wrangling

- pivot tables are very easy to run and output

video 3 [5min] - what should be saved

- Jupyter is great because we can explore data by jumping around in different code blocks (nonlinearity)

- before saving, linearize your notebook. “Restart & Run All” is your friend

video 4 [6min] - git and github

- don’t check your data into version control (it should be acquired in code, if possible)

video 5 [7min] - turn your code into a python package

- package useful bits of code so you don’t have to c/p into other notebooks

- requires a few bits of code, but nothing complicated

- create

__init__.pyfile in directory that imports objects from w/in that same dir

- create

video 6 [6min] - test your code

- unit tests ensure the results of your methods do what they are supposed to

- is a positive signal to others that your code can be relied upon

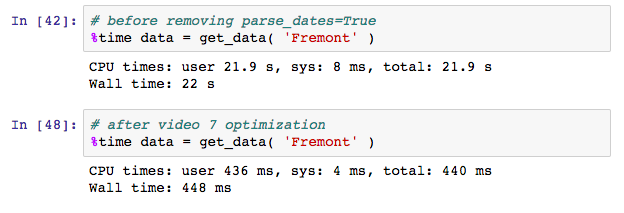

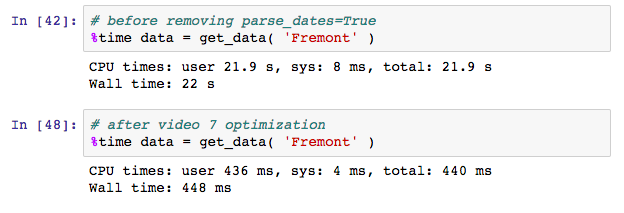

video 7 [6min] - refactoring for speed

- if there’s something common that can be optimized, pandas has a way to do it

- debugging is a learned art. watch the videos to get better at it in your own code

- when you find a bug in your code, that’s a good candidate for unit testing

video 8.5 [8min] - finding, fixing, PR for scikit-learn bug

- pretty neat - watch him find, fix, and submit a PR for a bug in a major library

video 9 [8min] - more sophisticated analysis

video 10 [8min] - cleaning up the notebook

- to go from a Jupyter notebook exploration to a Reproducible Result, and to share with other people, try to linearize your notebook. Jake’s tweet is pretty straightforward here:

Idea: Jupyter notebooks could have a "reproducibility mode" where:

— Jake VanderPlas (@jakevdp) November 27, 2017

1) Code cells are read-only once executed

2) New code cells cannot be inserted above previously executed cells

3) No cell can be executed until all previous cells are executed

In short, think about how other people, including yourself at your next session, will likely approach your code. It’s great to explore in a non-linear fashion – that’s part of the power of this notebook IDE – but try to tidy up after yourself, even if it’s for your own sake.